Is there research to support curriculum mapping’s ability to generate positive-change results?

Curriculum Mapping Research

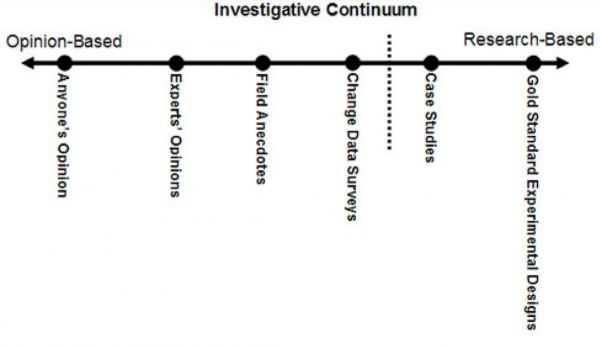

Generate positive-change results by using an opinion-based and research-based investigative continuum


This question is often asked. What makes it difficult to answer is how one defines results. One person may define results as a steady rise in test scores; another may consider results to be improved alignment among standards, content, skills, assessments, and instruction; and yet another may perceive results as collegial dialogue and decision-making that enhance student learning within and among grade levels, departments, or programs.

As you explore curriculum mapping through a research lens, use the graphic and the explanations that follow to gain insight into the continuum that runs from opinion-based to research-based sources.

On the Far Left…

At the far left of the investigative continuum lives Anyone’s Opinion, where results, or the perception of results, are expressed by anyone who may or may not have first-hand knowledge of or experience in a given field (in this case, curriculum mapping). I have read newspaper editorials, magazine articles, and blog posts in which I can tell the author(s) do not have a background in (or an understanding of) curriculum mapping, standards-based curriculum, or related areas, nor any experience with implementation.

Moving to the Right…

The next stop on the continuum represents Experts’ Opinion, in which an author or authors have first-hand experience, often encompassing various viewpoints and perspectives, gained over a period of years in a particular field of study. The expert or experts synthesize these experiences, viewpoints, and perspectives to establish, or add to, effective practices and positive results. An expert is recognized in his or her field and continues to move that field forward, as part of its natural advancement and evolution, through his or her own professional practice as well as that of fellow practitioners.

Field Anecdotes are narratives from a person or persons who have experienced one or more aspects of a given field of study first-hand. These narrators provide an analysis or evaluation based on their personal perceptions, attitudes, and beliefs, describing how these three perspectives positively impact student (or educator) learning and improve instruction and assessment practices.

Change Data Surveys report individual or group findings regarding positive, measurable change. These findings often focus on connections among student learning, achievement, and satisfaction; instructional pedagogies; assessment purposes and practices; or some combination of these. Positive-change results may be immediate or measured over time. Qualitative data, such as interviews, surveys, and observations (e.g., walk-throughs, recorded coaching/feedback sessions), is often included.

Case Studies move the investigative continuum across the “dotted line,” which symbolizes crossing over toward information and data that are closer to gold-standard research results. These studies involve well-planned and well-executed research that often becomes a doctoral thesis or quantitative study. While this investigative genre yields good data, it is not considered gold-standard research because it does not use control groups and its sample may not be large enough.

While Gold Standard Experimental Design is considered truly research-based, it becomes problematic when one considers the required guidelines, for example, those of the Institute of Education Sciences (IES), which were predominant in the No Child Left Behind (NCLB) era. Dick Dalton, a special-education teacher and parent, posted the following commentary on his website, The Life That Chose Me, during that era. While he voices “anyone’s opinion,” he conveys well why it is difficult to expect gold-standard research to be conducted in any social science setting, including curriculum mapping:

The Use of Educational Research…

Under the best of conditions, educational research is difficult. The randomized group designs advocated by NCLB as the gold standard have their roots in the methods we use when testing the yield of various agricultural crops or the performance of animals. For instance, if I want to test the effectiveness of weed control measures, I randomly assign different plots of crops to the experimental or control conditions….The crops are monitored and observations are made throughout the growing season and a person might be able to see the result visually if the results are remarkable enough. But the telling evidence is in the yield, when the crops are harvested. If there is a significant difference in yield in all the experimental plots as opposed to the control plots, then we might attribute it towards the independent variable, which in this case is weed control.

The problem with using this method of research on students is that they are not plants, which are relatively easy to control. Plants don’t ride home on a bus at the end of the day entering a myriad of different environments that can affect educational performance….Another problem, and this is even more critical, is that the random assignment of students only yields results of sufficient statistical power if the groups are large. This is fine in a wheat field where one plant is pretty much like another. Each wheat plant only represents a handful of grain. But each individual student represents a life span much longer than that of any agricultural commodity and a potential resource to a family, neighborhood or community much greater than an entire field of wheat. With large groups, statistical significance is measured only in terms of the aggregate as if each student is a data point and might as well be a bushel of grain.

I’ll give one more flaw to this methodology, which is one of ethical consideration. Supposing I study a group of 200 students who are behind in reading, comparing some type of new reading instruction designed [to] accelerate the reading abilities of fifth graders. Students are randomly assigned to two different groups. One group of 100 receives the new type of instruction by specially trained teachers. The other group is the control group and receives whatever instruction is regularly given. At the end of my study, I discover that my experimental group increased their reading ability by an entire standard deviation over the control group. Woo-hoo! High fives all around! Right? Well, yes. And no. What happens to those 100 students randomly assigned to the control group as they head off to middle school?

Mr. Dalton makes a critical point. Education is not conducive to control-group environments. We are dealing with lives that have been entrusted to our care, and we want all students to have equal access to success and meaningful learning experiences. Employing an experimental control-group design to measure positive results is not in students’ best interests.

Therefore, meaningful insight into curriculum mapping’s ability to generate positive-change results is best gained by reading, listening, networking, and considering what experts’ opinions, field anecdotes, change data surveys, and case studies convey, both historically and currently. While gold-standard studies of curriculum mapping may not be available, there is an abundance of evidence-based sources emphasizing the critical role that curriculum mapping, curriculum design, and informed collaborative decision-making play in positively impacting learning, assessments, and instructional practices.

In conclusion, it is important to remember that curriculum mapping involves second-order change, which I address in my Curriculum Mapping Basics. I recommend networking with schools, districts, and universities that can share how they have slowly and steadily developed positive impacts on curriculum and instruction, empowering students and teachers as engaged learners through curriculum mapping. If you would like to network with a learning organization, please contact me, and I will attempt to connect you with a similar learning organization.

Investigative Continuum – Sample of Sources

Experts’ Opinions

Curriculum Mapping

Hale, J. A. (2008). A guide to curriculum mapping: Planning, implementing, and sustaining the process. Thousand Oaks, CA: Corwin Press.

Hale, J. A., & Dunlap, R. E. (2010). An educational leader’s guide to curriculum mapping: Creating and sustaining collaborative cultures. Thousand Oaks, CA: Corwin Press.

Jacobs, H. H. (1997). Mapping the big picture: Integrating curriculum and assessment K-12. Alexandria, VA: ASCD.

Jacobs, H. H. (2004). Getting results with curriculum mapping. Alexandria, VA: ASCD.

Curriculum Design

Ainsworth, L. (2014). Rigorous curriculum design: How to create curricular units of study that align standards, instruction, and assessment. Englewood, CO: Lead+Learn Press.

Erickson, H. L. (2007). Concept-based curriculum and instruction for a thinking classroom. Thousand Oaks, CA: Corwin Press.

Wiggins, G., & McTighe, J. (2011). The Understanding by Design guide to advanced concepts in creating and reviewing units. Alexandria, VA: ASCD.

Wiggins, G., & McTighe, J. (2011). The Understanding by Design guide to creating high-quality units. Alexandria, VA: ASCD.

Wiggins, G., & McTighe, J. (2015). Solving 25 problems in unit design: How do I refine my units to enhance student learning? Alexandria, VA: ASCD Arias.

Professional Learning Communities

DuFour, R., DuFour, R., Eaker, R., & Many, T. (2010). Learning by doing: A handbook for professional learning communities at work – A practical guide for PLC teams and leadership. Bloomington, IN: Solution Tree.

DuFour, R., & Reason, C. (2015). Professional learning communities at work and virtual collaboration: On the tipping point of transformation. Bloomington, IN: Solution Tree.

Field Anecdotes

Curriculum Mapping

Mills, M. S. (2003). Curriculum mapping as professional development: Using maps to jump-start collaboration. Curriculum Technology, 12(3).
